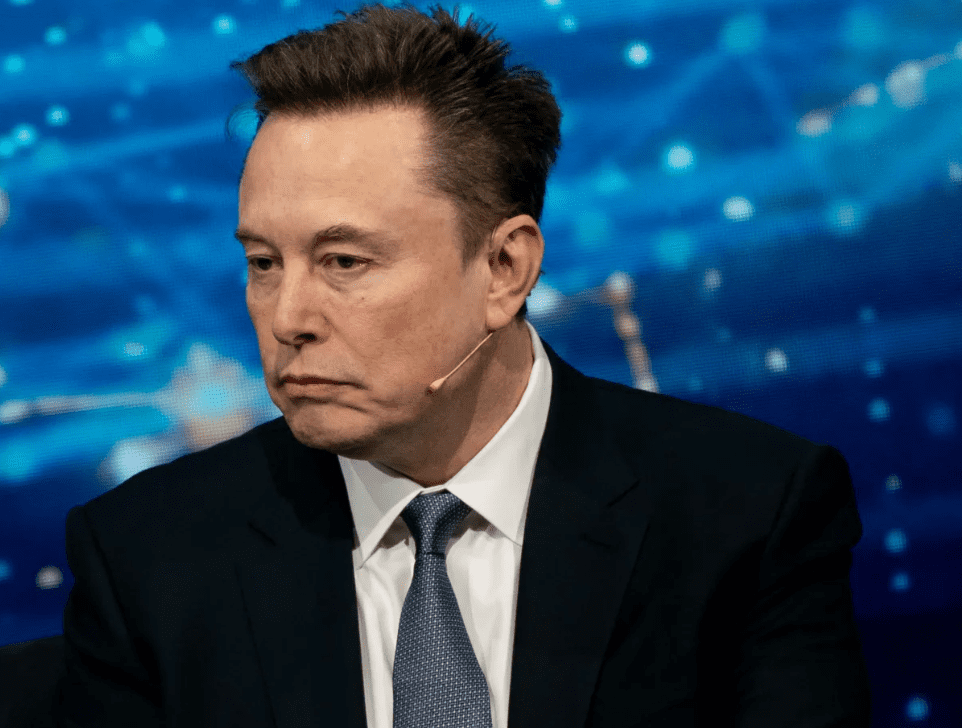

As tensions escalate across the Middle East, the battle for information is moving rapidly online. Now, Elon Musk’s social media platform X has launched a dramatic crackdown on AI-generated war footage, warning creators that misleading content could lead to severe penalties.

The platform announced new restrictions targeting unlabeled AI-generated videos related to the ongoing US–Iran conflict. Under the new rules, creators who fail to disclose AI-generated content could lose access to revenue sharing for 90 days, with repeat offenders facing permanent removal from the program.

The move highlights growing concerns about how artificial intelligence can shape public perception during international conflicts. With realistic AI video technology becoming easier to use, experts fear that misinformation could spread faster than verified reporting.

As the conflict intensifies, the platform is attempting to restore trust while preventing manipulated content from influencing global audiences.

X Introduces Strict Rules on AI-Generated War Content

The new policy specifically targets creators who publish AI-generated videos depicting armed conflict without clearly labeling them as artificial.

According to X leadership, such content can easily mislead viewers, especially during fast-moving geopolitical crises when accurate information is critical.

The platform’s head of product, Nikita Bier, announced the changes in a public statement explaining that the policy aims to protect the authenticity of information shared on the platform.

He emphasized that AI tools now allow users to create highly convincing war footage in minutes. Without clear disclosure, these videos can easily appear real and confuse audiences.

Because of this risk, X will immediately suspend creators from the revenue sharing program if they post undisclosed AI-generated war videos.

The suspension will last for 90 days.

If a user repeats the violation, they could be permanently banned from earning money through the program.
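The penalty ladder described above can be sketched in a few lines of Python. This is only an illustration, not X's actual enforcement logic: the `CreatorRecord` type, the `apply_violation` function, and the assumption that the second violation is the one that triggers permanent removal are all hypothetical. Only the 90-day figure comes from the announcement.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

SUSPENSION_DAYS = 90  # suspension length stated in the announcement


@dataclass
class CreatorRecord:
    """Hypothetical record of a creator's standing in revenue sharing."""
    violations: int = 0
    suspended_until: Optional[date] = None
    permanently_removed: bool = False


def apply_violation(record: CreatorRecord, today: date) -> CreatorRecord:
    """Apply one undisclosed-AI-war-video violation to a creator record.

    Assumption: the first violation brings a 90-day suspension and any
    later violation brings permanent removal from the program.
    """
    record.violations += 1
    if record.violations == 1:
        record.suspended_until = today + timedelta(days=SUSPENSION_DAYS)
    else:
        record.suspended_until = None
        record.permanently_removed = True
    return record
```

Under these assumptions, a creator flagged for the first time on 1 March 2026 would be suspended until 30 May 2026, and a second flag would remove them from the program for good.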

Why AI War Videos Are a Growing Problem

Artificial intelligence has rapidly transformed how content is created online.

Modern generative tools can produce videos that simulate explosions, missile strikes, military aircraft, and battlefield scenes with stunning realism.

During conflicts, such content spreads quickly on social media platforms where users search for updates and visual confirmation of events.

However, when AI videos circulate without disclosure, viewers may mistake fictional scenes for real military footage.

This creates a dangerous environment where misinformation can influence public opinion, spark panic, or even escalate political tensions.

For this reason, the issue of AI-generated war content has become a major concern for governments, journalists, and technology companies alike.

How X Plans to Detect AI-Generated Content

To enforce the new rules, the platform will rely on several methods to identify artificial videos.

According to the company, suspicious content may be flagged through metadata embedded in files generated by AI tools. These technical markers often reveal whether a video was produced using generative software.

Additionally, the platform will use its Community Notes system to highlight misleading or manipulated content.

Community Notes allows users to collaboratively add context to posts that may contain false or misleading information.

If AI-generated videos are detected without disclosure, they may receive warnings and be flagged publicly.

This system aims to increase transparency while allowing viewers to better understand the origin of viral videos.
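As a rough illustration of the metadata check described above, the following Python sketch flags a video only when its metadata marks it as AI-generated and the creator has not disclosed that. The `ai_generated` field name and both function names are hypothetical. The company has not published which markers it reads, and real files may carry this information in a very different form.

```python
def has_ai_marker(metadata: dict) -> bool:
    """Return True if a hypothetical `ai_generated` metadata field is set."""
    value = str(metadata.get("ai_generated", "")).strip().lower()
    return value in {"true", "1", "yes"}


def needs_public_flag(metadata: dict, disclosed_by_creator: bool) -> bool:
    """Flag only videos that carry an AI marker but lack a disclosure."""
    return has_ai_marker(metadata) and not disclosed_by_creator
```

A check like this only catches files whose metadata survives upload and re-encoding, which is one reason the platform pairs it with Community Notes.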

The Role of AI on Elon Musk’s Platform

The crackdown comes even though X has embraced artificial intelligence as a core feature of the platform.

Since 2023, the platform has integrated Grok, an AI chatbot developed by Musk's company xAI and designed to answer questions and interact with users in real time.

The company has repeatedly promoted AI innovation and has encouraged developers to build new tools using the technology.

However, the rapid growth of generative AI has also revealed serious risks, especially during global crises.

In times of conflict, realistic synthetic media can easily blur the line between verified reporting and fabricated scenes.

The new policy reflects the platform’s attempt to balance AI innovation with responsible information management.

The US–Iran Conflict Driving the Policy Change

The policy announcement comes during a dramatic escalation in the ongoing conflict between the United States, Israel, and Iran.

Recent military operations in the region have triggered missile strikes, drone attacks, and retaliatory actions across multiple Middle Eastern countries.

The conflict intensified after US and Israeli forces launched major strikes targeting Iran’s missile infrastructure.

Iran’s Supreme Leader Ayatollah Ali Khamenei was reportedly killed in the missile strikes around Tehran.

In response, Iran launched its own attacks on several US-allied states in the region.

Countries affected by retaliatory strikes include Israel, Qatar, the United Arab Emirates, Bahrain, and Kuwait.

Videos showing explosions, missile launches, and damage across cities have flooded social media platforms since the escalation began.

Some of this content comes from eyewitness recordings.

However, experts warn that a growing portion of viral clips may be AI-generated simulations.

This is exactly the type of content the new policy is designed to address.

Social Media as the New Battlefield

In modern conflicts, the struggle for control of information often plays out alongside military operations.

Social media platforms have become primary sources of news for millions of people worldwide.

While this allows rapid access to updates, it also creates opportunities for misinformation to spread quickly.

AI-generated videos can amplify this problem by presenting fictional events as real.

False footage can mislead audiences, influence political narratives, or manipulate public reactions to global crises.

Technology companies now face increasing pressure to prevent such scenarios while maintaining open communication on their platforms.

The latest policy from X reflects the growing recognition that digital misinformation can carry serious consequences.

Can Platforms Really Control AI Misinformation?

Despite new rules and detection tools, controlling AI-generated misinformation remains a major challenge.

Generative technology continues to improve rapidly, making artificial videos more realistic and harder to identify.

At the same time, millions of users upload new content every day, making moderation extremely complex.

Some experts argue that stronger verification systems and clearer labeling standards will be necessary across the entire social media industry.

Others believe education will play a crucial role.

Users must learn to question viral content and verify sources before accepting dramatic footage as genuine.

In the coming years, the fight against AI misinformation is likely to become one of the defining challenges for technology platforms.

A New Era of Digital Conflict

The crackdown on AI-generated war videos signals how dramatically the information landscape has changed.

Modern wars are no longer fought only with missiles and drones.

They are also fought through images, videos, and narratives shared online.

Platforms like X now find themselves on the front line of that digital battlefield.

By enforcing stricter rules on AI-generated content, the company hopes to maintain trust during moments when accurate information matters most.

Whether these measures will be enough remains to be seen.

But one thing is clear.

As artificial intelligence continues to reshape media, the battle against misinformation will become just as important as the conflicts unfolding on the ground.
